Published on March 19, 2026

Can you guess when one of the first feature-length sci-fi movies was released? Almost a century ago, in 1927, when the German expressionist silent film ‘Metropolis’, directed by Fritz Lang, came out! The movie, which features a humanoid robot, is getting ready to celebrate its centennial! Much later, in 1984, James Cameron’s ‘The Terminator’ became a sci-fi hit and was followed by a super-hit series. The same pattern repeated with the Marvel and Star Wars series.

As ‘artificial’ intelligence has been rooted in the human imagination for a century, I thought of summarizing 7 distinct phases in the evolution of Artificial Intelligence, with simplified definitions, examples, and limitations.

Let’s take a look:

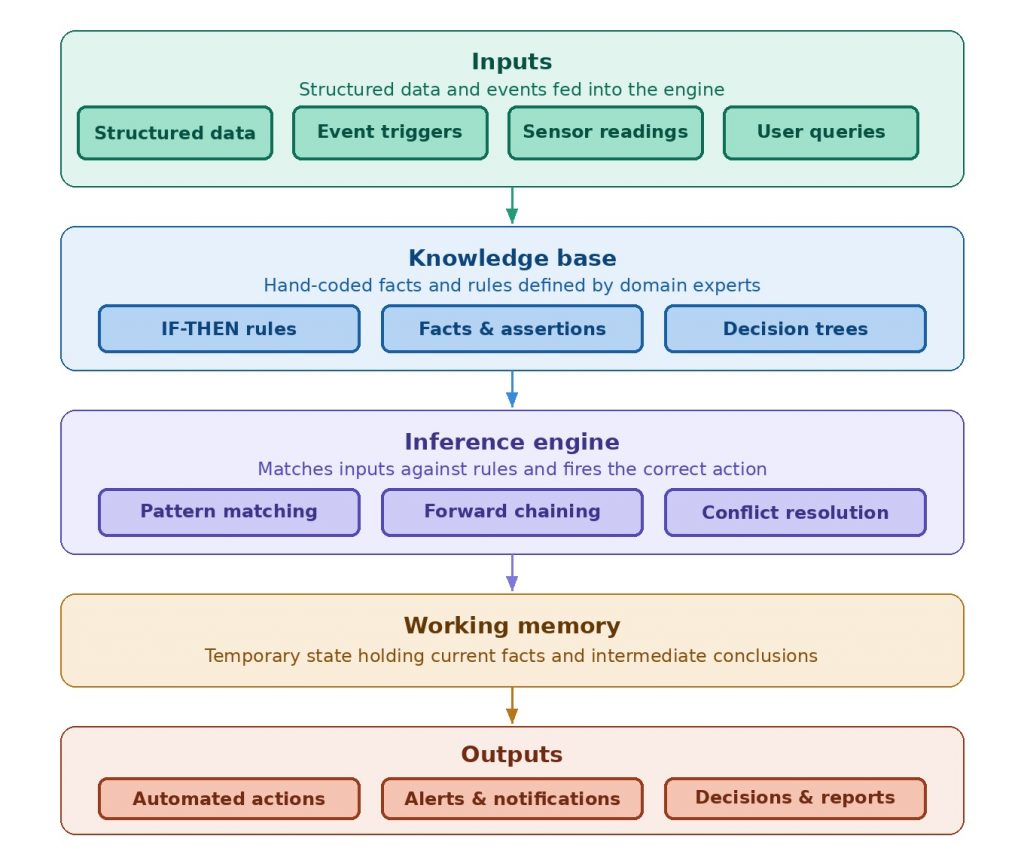

Stage 1: Rule Based Automation Engine (1970s to 1990s)

💎 Follows hand-coded, predefined, static rules to get from point ‘A’ to point ‘B’, or to make a decision at a given point exactly as written in the code.

🎯 E.g. Early chatbots like ELIZA by Joseph Weizenbaum at MIT, the initial version of Apple’s conversational assistant Siri, and if-then-else based business rule engines.

⛔ Limitations:

- Does not learn or adapt

- The system breaks if an undefined scenario occurs
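To make the limitation concrete, here is a minimal sketch of a rule-based engine in the spirit of the early chatbots. The rules, replies, and the `reply` function are hypothetical and only for illustration; they are not how ELIZA or Siri were actually implemented.

```python
import re

# Hypothetical hand-coded rules: (pattern, canned reply). Nothing here is learned.
RULES = [
    (re.compile(r"\b(hello|hi)\b", re.IGNORECASE), "Hello! How can I help you?"),
    (re.compile(r"\brefund\b", re.IGNORECASE), "Please contact billing about refunds."),
    (re.compile(r"\bI feel (.+)", re.IGNORECASE), "Why do you feel {0}?"),
]

FALLBACK = "Sorry, I do not understand."


def reply(message: str) -> str:
    """Return the reply of the first matching rule, else the fallback."""
    for pattern, template in RULES:
        match = pattern.search(message)
        if match:
            # Captured text (if any) is echoed back into the template.
            return template.format(*match.groups())
    return FALLBACK


if __name__ == "__main__":
    print(reply("Hi there"))
    print(reply("I feel tired"))
    print(reply("Can you book me a flight?"))  # no rule covers this request
```

The last call falls through to the fallback: the engine cannot handle anything its author did not anticipate, and the only fix is to write another rule by hand.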

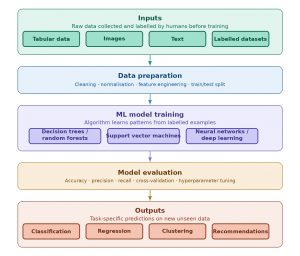

Stage 2: Machine Learning / AI (2000s – mid-2010s)

💎 Powerful algorithms learn patterns from data rather than from hand-written rules.

Generates data-driven predictions and classifications.

🎯 E.g. Early versions of Netflix suggesting titles based on viewers’ choices and browsing history, shopping suggestions based on purchase history, and early fraud detection systems.

⛔ Limitations:

- Fails when the data is incomplete, noisy, or otherwise of poor quality

- Models are narrow: each model handles only one task
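As a rough illustration of learning from data instead of rules, the sketch below builds purchase suggestions purely from past baskets. It uses simple co-occurrence counting, which is an assumption made for brevity and not what any real recommender from this era necessarily used; the basket data is made up.

```python
from collections import Counter
from itertools import combinations

# Hypothetical purchase history: one set of items per customer basket.
BASKETS = [
    {"laptop", "mouse", "laptop bag"},
    {"laptop", "mouse"},
    {"phone", "phone case"},
    {"laptop", "laptop bag"},
    {"phone", "charger", "phone case"},
    {"laptop", "mouse"},
]


def fit(baskets):
    """'Training': count how often each ordered pair of items was bought together."""
    pair_counts = Counter()
    for basket in baskets:
        for a, b in combinations(sorted(basket), 2):
            pair_counts[(a, b)] += 1
            pair_counts[(b, a)] += 1
    return pair_counts


def recommend(pair_counts, item, top_n=2):
    """Rank other items by how often they co-occurred with `item`."""
    scores = Counter({b: c for (a, b), c in pair_counts.items() if a == item})
    return [name for name, _ in scores.most_common(top_n)]


model = fit(BASKETS)
print(recommend(model, "laptop"))
print(recommend(model, "tablet"))  # never seen in the data, so nothing to suggest
```

No rule about laptops was ever written; the suggestion comes from the data. The flip side is the limitation listed above: for an item missing from the history, the model has nothing to offer.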

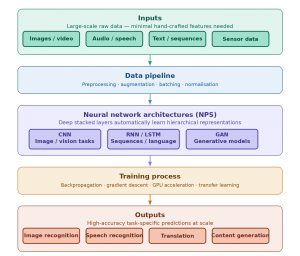

Stage 3: Deep Learning (2012 – 2020s)

💎 Deep learning systems are perception-based. The key difference from stage 2 machine learning is that they learn representations automatically from raw data through multi-layer neural network architectures, instead of relying on hand-engineered features. These narrow, task-specific networks should not be confused with LLMs.

Generative AI originated during this era but was very limited and niche.

🎯 E.g. Image recognition such as Face ID phone unlocking and tumor detection in X-rays/MRIs, lane detection, STT (speech-to-text), and OCR.

⛔ Limitations:

- Each model captures only task-specific patterns; it is not flexible and must be retrained when the task or the data changes.

- Still one model per use case or problem.
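The idea of a hidden layer building its own representation can be sketched with the classic XOR toy problem. Note the assumption: the weights below are hand-picked for readability, whereas a real deep learning system would learn them from data through training. The function names are illustrative only.

```python
def relu(x):
    """Standard rectified linear unit."""
    return max(0.0, x)


def hidden_layer(x1, x2):
    """Two hidden units. Weights are hand-picked here; training would normally find them."""
    h1 = relu(x1 + x2)        # grows with the number of inputs that are on
    h2 = relu(x1 + x2 - 1.0)  # fires only when both inputs are on
    return h1, h2


def xor_net(x1, x2):
    """Output layer: a plain linear combination of the hidden features."""
    h1, h2 = hidden_layer(x1, x2)
    return h1 - 2.0 * h2


for a in (0.0, 1.0):
    for b in (0.0, 1.0):
        print(a, b, "->", hidden_layer(a, b), "->", xor_net(a, b))
```

XOR cannot be separated by a single straight line on the raw inputs, but it can on the hidden features `(h1, h2)`. That re-representation is what the intermediate layers of a deep network provide, and this tiny network is also a fair picture of the limitation: it solves exactly one task.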

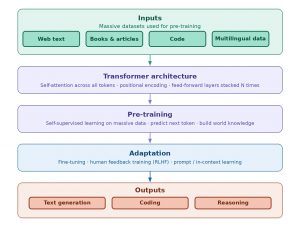

Stage 4: Foundation Models & Transformers (2018 – Present)

💎 This is the real birth moment of democratized, scaled Generative AI, that is, AI that creates content!

So, if the deep learning era (stage 3) was a powerful engine in a lab, the foundation models and transformers era (stage 4) is about putting that engine in a car along with the other parts so everyone can drive!

Transformers are a neural network architecture that transforms an input sequence into an output sequence by learning context and tracking relationships between the components of the sequence. Foundation models are models trained on massive data sets: not only text (like LLMs) but also other data types such as images, audio, video, or a combination of these.
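The "tracking relationships between sequence components" part is done by the attention mechanism. Below is a minimal pure-Python sketch of scaled dot-product self-attention. It is simplified on purpose: real transformers first pass each token through learned query, key, and value projections, while here the made-up token vectors are reused directly for all three.

```python
import math


def softmax(scores):
    """Turn raw scores into positive weights that sum to 1."""
    peak = max(scores)
    exps = [math.exp(s - peak) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def dot(a, b):
    return sum(x * y for x, y in zip(a, b))


def self_attention(queries, keys, values):
    """Scaled dot-product attention over a short sequence of vectors."""
    d_k = len(keys[0])
    outputs, all_weights = [], []
    for q in queries:
        # How strongly this token relates to every token in the sequence.
        scores = [dot(q, k) / math.sqrt(d_k) for k in keys]
        weights = softmax(scores)
        # The output is a weighted mix of all value vectors.
        mixed = [sum(w * v[i] for w, v in zip(weights, values))
                 for i in range(len(values[0]))]
        outputs.append(mixed)
        all_weights.append(weights)
    return outputs, all_weights


# Made-up 2-dimensional vectors standing in for a 3-token sequence.
tokens = [[1.0, 0.0], [0.0, 1.0], [1.0, 1.0]]
outputs, weights = self_attention(tokens, tokens, tokens)
for row in weights:
    print([round(w, 3) for w in row])
```

Each printed row shows how much one token attends to every other token. The first token gives less weight to the second token, with which it shares nothing, than to itself or the third token.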

What defines foundation models is their large-scale pretraining and general-purpose adaptability, not their modality. The transformer architecture laid the foundation for the stage 5 multimodal AI systems that emerged around 2022.

During this era, Generative AI became accessible, conversational, reusable, and mainstream. AI moved from the backend into user-facing products. One model started handling multiple types of content.

Foundation models can be called the brain engine that enables the development of autonomous AI agents. They can draw on users’ databases and APIs through the Retrieval-Augmented Generation (RAG) technique, which fetches relevant information and supplies it to the model alongside the prompt, making them specialized, transformational tools.
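A toy version of the RAG idea can be sketched as follows. Everything here is an assumption for illustration: the documents are invented, and word overlap stands in for the vector search that production systems typically use. The sketch stops at building the prompt and does not call any model.

```python
import re

# Hypothetical private documents the base model was never trained on.
DOCUMENTS = [
    "Refund requests must be filed within 30 days of purchase.",
    "The support desk is open Monday to Friday, 9am to 5pm.",
    "Enterprise customers get a dedicated account manager.",
]


def tokenize(text):
    """Lowercase the text and split it into a set of words."""
    return set(re.findall(r"[a-z0-9]+", text.lower()))


def retrieve(question, documents, top_k=1):
    """Rank documents by word overlap with the question (a stand-in for vector search)."""
    q_words = tokenize(question)
    ranked = sorted(documents, key=lambda d: len(q_words & tokenize(d)), reverse=True)
    return ranked[:top_k]


def build_prompt(question, documents):
    """Place the retrieved text in front of the question before it goes to the model."""
    context = "\n".join(retrieve(question, documents))
    return f"Answer using only this context:\n{context}\n\nQuestion: {question}"


print(build_prompt("When is the support desk open?", DOCUMENTS))
```

The model itself is unchanged. It simply receives the relevant private text together with the question, which is why RAG helps with specialization without retraining.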

An industry-wide explosion occurred during this phase: in content creation, design, coding, quality, pipelines, and the entire SDLC!

🎯 E.g. GPT-4 foundation model and ChatGPT, BERT, Copilot

⛔ Limitations:

- Hallucinates confidently.

- Lacks real-time awareness because its knowledge is not updated after training.

- Limited memory and context window, which caps the number of input tokens.

- Can inherit biases and produce harmful or unsafe content if fine-tuning and guardrails are not applied or not regularly monitored.

- High chance of failing at complex planning or edge-case math.

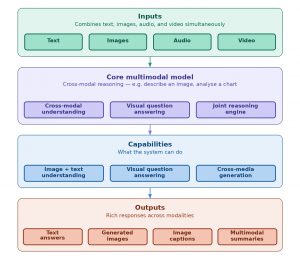

Stage 5: Multimodal AI systems (2022 – Present)

💎 Whereas most foundation models are unimodal and trained on massive data to create or analyze content, the multimodal era processes multiple data types simultaneously and also allows cross-signal reasoning, e.g. text-to-speech, text-to-image, or text-to-video.

What differentiates multimodality is the ability to make human-like connections, be intuitive, share perspective, make decisions, and do research through multimodal agents.

🎯 E.g. OpenAI’s GPT-4o, Google’s Gemini, and Meta’s ImageBind models.

Code generation, automated test suite creation, infrastructure-as-code agents in DevOps and CI/CD pipelines, debugging and fixing.

Reading patient notes and examining X-rays at the same time to support a diagnosis, or checking camera feeds and GPS together to make driving decisions in autonomous cars, etc.

⛔ Limitations:

- High cost due to heavy and complex computational and infrastructure needs.

- Complex integrations across different data types can break the entire agent.

- Hard to interpret and explain, and hard to handle missing or noisy modalities.

- High latency for complex real-time data processing (e.g. video).

- Inherited bias.

- Increased vulnerability to privacy and security threats.

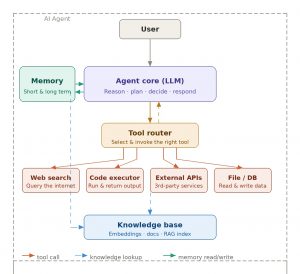

Stage 6: Tool-Integrated AI Agents (2023 and Emerging)

💎 The current era of autonomous agents can not only respond but also plan, use tools, integrate with external custom systems and databases, execute workflows, make decisions, and solve problems.

AI Agents Think → Act → Observe → Learn.

The AI agent takes the user’s prompt together with personalized knowledge sources and passes this contextualized information to the LLM, taking the LLM to the next level. It also integrates with organizational APIs, databases, file systems, and code. It can keep memory to build an intelligent system and make contextual decisions. Agents also allow every step to be debugged, which was not possible before the agent era.
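The Think → Act → Observe → Learn loop can be sketched in a few lines. This is a toy under stated assumptions: the `think` function is a hard-coded stand-in for the LLM, the two tools and their prices are invented, and "learning" is reduced to appending each step to memory.

```python
import operator

OPS = {"+": operator.add, "-": operator.sub, "*": operator.mul, "/": operator.truediv}


# Hypothetical tools; real agents would wrap organizational APIs or databases.
def calculator(expression):
    a, op, b = expression.split()
    return OPS[op](float(a), float(b))


def lookup_price(item):
    return {"flight": 420.0, "hotel": 150.0}[item]  # made-up prices


TOOLS = {"calculator": calculator, "lookup_price": lookup_price}


def think(goal, memory):
    """Stand-in for the LLM: pick the next tool call from what is already known."""
    known = {s["input"]: s["result"] for s in memory if s["tool"] == "lookup_price"}
    for item in goal["items"]:
        if item not in known:
            return "lookup_price", item
    if not any(s["tool"] == "calculator" for s in memory):
        first, second = (known[item] for item in goal["items"])
        return "calculator", f"{first} + {second}"
    return None  # nothing left to do


def run_agent(goal, max_steps=5):
    memory = []  # every step is recorded, so the run can be inspected afterwards
    for _ in range(max_steps):
        decision = think(goal, memory)        # Think
        if decision is None:
            break
        tool, tool_input = decision
        result = TOOLS[tool](tool_input)      # Act
        memory.append({"tool": tool, "input": tool_input, "result": result})  # Observe, Learn
    return memory


for step in run_agent({"items": ["flight", "hotel"]}):
    print(step)
```

The printed trace is the step-by-step record mentioned above: each tool call, its input, and its result can be reviewed or debugged individually.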

AI agents work very well for simple Q&A or brainstorming, for low-stakes decisions, and for cases where quick iteration matters and neither traceable reasoning nor stakeholder review is required.

🎯 E.g. Content generation agents, travel assistant agents that plan a user’s trip based on preferences, research agents, and autonomous coding agents such as Devin AI or those built on Claude 3.5 Sonnet.

⛔ Limitations:

- One “brain” LLM does too many jobs, which causes cognitive overload and reduces accuracy.

- A single generic agent lacks domain-specific specialization.

- A single agent processes tasks serially, which slows down execution.

- A single agent can be a single point of failure in case of an error or hallucination.

- Complex problems face scalability challenges.

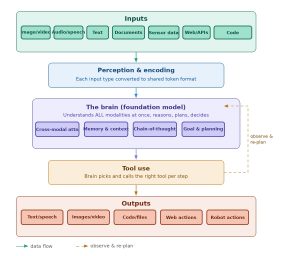

Stage 7: Multimodal Autonomous AI systems (2025 and Emerging)

💎 A new generation of fully multimodal, highly autonomous AI systems operates not only in the digital world (web browsers and GUIs) but also in the physical world (robotics), closely mimicking human cognitive processes.

Multiple specialized agents are orchestrated together, combining Large Language Models (LLMs) with voice models, Vision-Language Models (VLMs), and action-oriented tools.

Multimodal AI agents run in a loop: they Sense → Think → Act → Observe → Learn → Think again until completion.
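Here is a minimal sketch of specialized agents being orchestrated in such a loop. The one-dimensional warehouse aisle and the three agents are hypothetical: simple functions stand in for the vision model, the LLM planner, and the robot controller, and the Learn step is left out to keep the sketch short.

```python
from dataclasses import dataclass, field


@dataclass
class World:
    """Hypothetical one-dimensional warehouse aisle."""
    robot: int = 0
    parcel: int = 3
    log: list = field(default_factory=list)


def vision_agent(world):
    """Sense: stands in for a vision model reporting where the parcel is."""
    return {"offset": world.parcel - world.robot}


def planner_agent(observation):
    """Think: stands in for an LLM planner choosing the next action."""
    if observation["offset"] == 0:
        return "pick"
    return "forward" if observation["offset"] > 0 else "back"


def motion_agent(world, action):
    """Act: apply the chosen action to the world and record it."""
    if action == "forward":
        world.robot += 1
    elif action == "back":
        world.robot -= 1
    world.log.append(action)


def orchestrate(world, max_steps=10):
    """Run the agents in a loop until the parcel is picked or the step budget runs out."""
    for _ in range(max_steps):
        observation = vision_agent(world)    # Sense
        action = planner_agent(observation)  # Think
        motion_agent(world, action)          # Act
        if action == "pick":                 # Observe: goal reached, stop looping
            return True
    return False                             # gave up: step budget exhausted


world = World()
print(orchestrate(world), world.log)
```

The step budget is a simple guard: if the agents never reach the goal, the orchestrator stops and reports failure instead of looping forever.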

Multimodal agentic autonomous systems are capable of contextual understanding, real-time interaction, and error recovery, with reduced human intervention.

They perform very well where decisions are complex and involve tradeoffs, stakes are high (cost, privacy, reliability), audit and traceability are needed, and stakeholder review and production-grade quality are required.

🎯 E.g. Autonomous vehicles from Waymo (LiDAR) and Tesla (cameras), Amazon warehouse automation, Siemens industrial manufacturing and predictive maintenance, etc.

⛔ Limitations:

- High computational and infrastructure cost.

- Hallucinations.

- Error compounding.

- Security threats.
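Error compounding is easy to see with a little arithmetic. The sketch below assumes, purely for illustration, that each step of an agent pipeline succeeds independently with the same probability; the 95% figure is invented, not a measured value.

```python
def chain_success(per_step_success: float, steps: int) -> float:
    """Probability that every step succeeds, assuming steps fail independently."""
    return per_step_success ** steps


for steps in (1, 5, 20):
    print(steps, "steps:", round(chain_success(0.95, steps), 3))
```

Under these assumptions, a pipeline whose individual steps each look reliable becomes more likely to fail than to succeed once the chain grows to 20 steps, which is why long autonomous workflows need checkpoints and error recovery.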

‘Artificial Intelligence’, an engineering concept at the core of Industry 4.0 (I4) industrial automation, is redefining the way humans live! It has already hit like a tsunami, and bigger and better waves are yet to come!

<Note: Component architecture diagrams are generated through Anthropic Claude Sonnet 4.6.>